Infrastructure Precedes Autonomy.

The Agent-Ready Methodology

The Empirical Framework for Controlled Enterprise Autonomy.

Deploying autonomous AI systems on an immature, fragmented data foundation is an explicit operational liability. Based on extensive doctoral research into cloud implementation failures, our firm uses a rigid, six-part maturity framework to transform chaotic data environments into high-containment environments built for safe automation.

The following frameworks define the structural conditions required for safe, scalable enterprise autonomy.

From Cloud BI to the Agentic Enterprise.

The Six Control Matrix Pillars

Strategic Alignment

The Failure: Speculative AI experimentation disconnected from measurable fiscal outcomes.

The Mandate: Locking model capabilities directly to defined business vectors and risk thresholds.

Governance & Resource Discipline

The Failure: Open-ended autonomous execution creating volatile compute bills.

The Mandate: Embedding automated cost governors directly into pipeline orchestration layers.
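
As an illustration of what a cost governor inside an orchestration layer might look like, here is a minimal Python sketch. The `CostGovernor` class, the `BudgetExceeded` exception, the step names, and the dollar figures are all hypothetical and are not taken from any specific orchestration product; a real deployment would pull cost estimates from the platform's own billing or metering data.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a pipeline step would push spend past its cap."""


@dataclass
class CostGovernor:
    budget_usd: float        # hypothetical per-run spending cap
    spent_usd: float = 0.0   # running total of approved spend

    def charge(self, step: str, estimated_usd: float) -> None:
        """Approve a step's estimated cost, or refuse it if the cap would be exceeded."""
        if self.spent_usd + estimated_usd > self.budget_usd:
            remaining = self.budget_usd - self.spent_usd
            raise BudgetExceeded(
                f"step {step!r} needs ${estimated_usd:.2f}, only ${remaining:.2f} remains"
            )
        self.spent_usd += estimated_usd


# Illustrative run: two steps fit the budget, the third is refused.
governor = CostGovernor(budget_usd=10.0)
governor.charge("embed_documents", 4.0)
governor.charge("agent_query", 5.0)
try:
    governor.charge("agent_retry_loop", 3.0)
    blocked = False
except BudgetExceeded:
    blocked = True
```

The point of the design is that the check runs before the step executes, so an open-ended retry loop stops at the cap instead of surfacing on the invoice.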

Technical Architecture Integrity

The Failure: Building AI tools on top of fragile, un-normalized, or undocumented schemas.

The Mandate: Enforcing 3NF or dimensional rigor at the physical storage layer to minimize query depth.

Semantic Trust & Metric Codification

The Failure: Algorithmic amplification of inconsistencies, because different business units hold conflicting definitions of the same metric.

The Mandate: Locking metric definitions inside a central, machine-interpretable semantic layer.
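
One way to picture a locked, machine-interpretable metric definition is a read-only registry that every consumer, human or agent, must resolve names against. The sketch below is illustrative only: `MetricDefinition`, `METRICS`, `resolve_metric`, the `net_revenue` metric, and its SQL expression are assumptions made for the example, not part of any particular semantic-layer product.

```python
from dataclasses import dataclass
from types import MappingProxyType


@dataclass(frozen=True)  # frozen: a definition cannot be mutated after creation
class MetricDefinition:
    name: str
    sql_expression: str
    owner: str


_definitions = {
    "net_revenue": MetricDefinition(
        name="net_revenue",
        sql_expression="SUM(gross_amount) - SUM(refund_amount)",  # hypothetical columns
        owner="finance",
    ),
}
# Read-only view: callers can look metrics up but cannot add or overwrite them.
METRICS = MappingProxyType(_definitions)


def resolve_metric(name: str) -> MetricDefinition:
    """Return the single agreed definition, or fail loudly for unknown names."""
    try:
        return METRICS[name]
    except KeyError:
        raise LookupError(f"metric {name!r} is not defined in the semantic layer") from None
```

An agent that asks for an undefined metric such as `revenue` gets an error rather than an improvised calculation, which is the behavior the mandate is after.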

Access Segregation & Propagation

The Failure: AI models bypassing corporate permissions or exposing sensitive data tables.

The Mandate: Enforcing identity-aware access controls down to the retrieval pipeline and row levels.
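
A minimal sketch of identity-aware, row-level filtering at the retrieval step follows. The `Caller` type, the `region` entitlement model, and the sample rows are hypothetical; production systems would normally enforce this in the database or query engine rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Caller:
    user_id: str
    regions: frozenset[str]  # hypothetical entitlement: regions this identity may read


ROWS = [
    {"order_id": 1, "region": "EMEA", "amount": 120},
    {"order_id": 2, "region": "APAC", "amount": 75},
    {"order_id": 3, "region": "EMEA", "amount": 40},
]


def retrieve_for(caller: Caller, rows: list[dict]) -> list[dict]:
    """Return only the rows the caller is entitled to see.

    The filter is applied before anything reaches the model, so the agent
    never holds data that the requesting identity could not query directly.
    """
    return [row for row in rows if row["region"] in caller.regions]


analyst = Caller(user_id="u-17", regions=frozenset({"EMEA"}))
visible = retrieve_for(analyst, ROWS)
```

Here `visible` contains only the two EMEA orders; a caller with no entitlements receives an empty list rather than an error that leaks the table's existence.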

Traceability & Audit Infrastructure

The Failure: Blind execution loops where autonomous actions cannot be verified or reversed.

The Mandate: Recording a complete lineage trace for every machine-generated transaction.
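
As a rough sketch of what a per-transaction lineage record could contain, the example below chains each record to its predecessor with a SHA-256 hash so that gaps or edits in the trail are detectable. The `lineage_record` function, its field names, and the agent and table names are assumptions for illustration, not a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def lineage_record(agent_id, action, inputs, parent_hash=None):
    """Build one lineage entry, linked to the previous entry by its hash."""
    body = {
        "agent_id": agent_id,
        "action": action,
        "inputs": inputs,
        "parent_hash": parent_hash,  # None marks the start of a chain
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    # Hash a canonical (sorted-key) serialization so the digest is reproducible.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    body["hash"] = hashlib.sha256(payload).hexdigest()
    return body


# Illustrative chain: a read followed by an update that cites it.
first = lineage_record("agent-7", "read", {"table": "orders"})
second = lineage_record("agent-7", "update", {"order_id": 3}, parent_hash=first["hash"])
```

Because each record names its parent, an auditor can walk backwards from any machine-generated change to the reads that informed it.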

Evolution Toward Autonomy

Cloud BI

↓

Governed Cloud BI

↓

Semantic Hardening

↓

Controlled Autonomy

↓

Agentic Enterprise

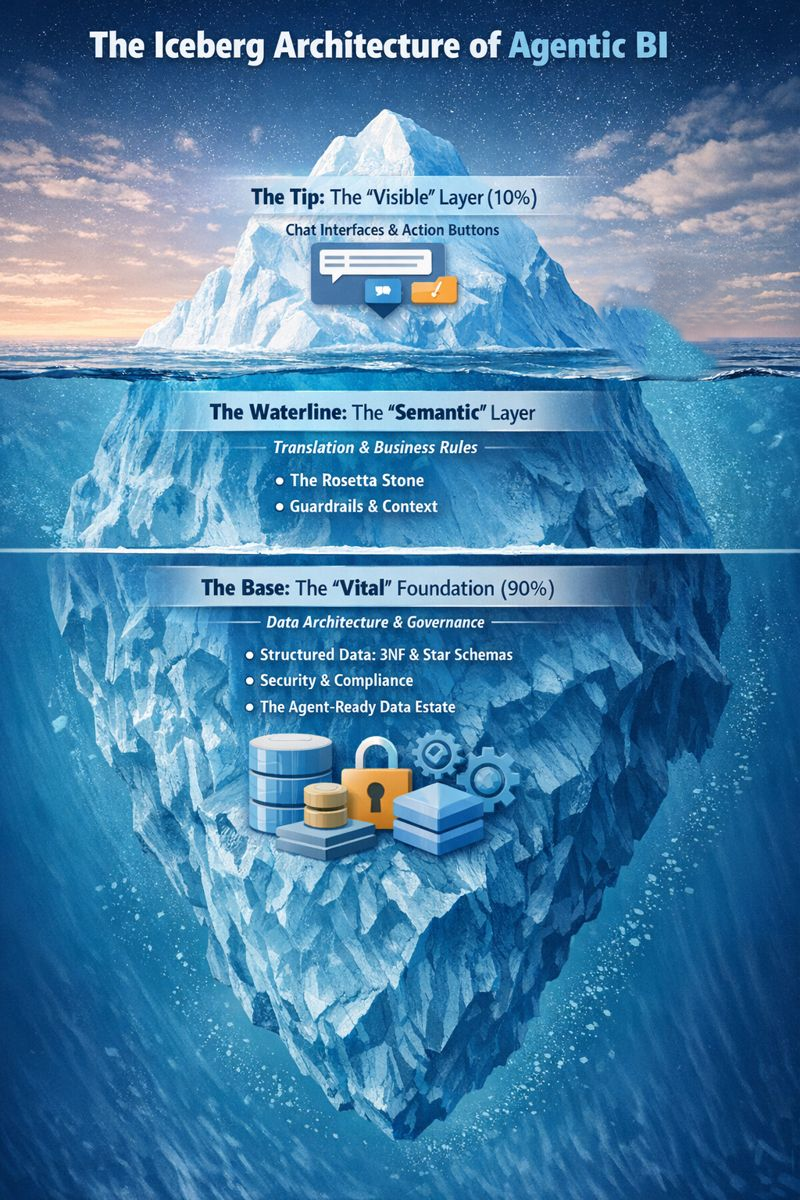

The Iceberg Architecture

Maturity is visible at the surface. Architecture determines what survives below it.

Autonomy is visible. Governance is structural.

Most organizations focus on the visible layer of AI: interfaces, copilots, and action buttons.

But beneath every agent lies a deeper architecture.

The Iceberg Model separates three structural layers:

1. The Visible Layer (10%)

Chat interfaces and action triggers.

2. The Semantic Layer

Business rules, translation logic, context control, lineage.

3. The Structural Foundation (90%)

Data modeling discipline

Security segmentation

Observability and audit controls

Governance enforcement

Autonomy does not amplify reporting risk.

It amplifies architectural risk.

An AI agent is only as trustworthy as the semantic layer it queries.

Structure must be translated into operating discipline.

Agent Ready Data Estate

Containment architecture for enterprise autonomy.

Agentic systems do not fail because of intelligence gaps.

They fail because of architectural instability.

Executive Brief: The Agent-Ready Data Estate →

The Agent-Ready Data Estate defines the structural conditions required for safe autonomy.

It consists of four non-negotiable disciplines:

1. Schema Discipline

Well-modeled, governed data structures. No semantic ambiguity. No undocumented joins.

2. Semantic Integrity

Business logic encoded in shared translation layers. Clear definitions, lineage, and context boundaries.

3. Segmentation & Policy Enforcement

Row-level controls. Role-based access. Security implemented as architecture — not approval workflows.

4. Observability & Auditability

Complete traceability of decisions, queries, and transformations. Agents operate inside monitored containment zones.

Autonomy requires containment. Containment requires architecture. Architecture defines stability. Runtime governance prevents escalation.

An Agent-Ready Estate is built below the waterline.

Runtime Governance Model

The Circuit Breaker Protocol

Fail-safe containment architecture for autonomous systems.

When AI agents exceed policy boundaries, generate anomalous behavior, or encounter semantic instability, they must not escalate risk.

The Circuit Breaker Protocol defines automated containment triggers that:

Suspend execution

Revert to safe state

Log decision lineage

Escalate to human review
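
The four triggers above can be sketched as a small wrapper around agent actions. This is a simplified illustration under stated assumptions: the `CircuitBreaker` class, its `run` method, the `discount_pct` policy, and the in-memory log and review queue are hypothetical stand-ins for whatever policy engine, audit store, and ticketing system an organization actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CircuitBreaker:
    max_violations: int = 1
    violations: int = 0
    suspended: bool = False
    log: list[str] = field(default_factory=list)           # decision lineage
    review_queue: list[str] = field(default_factory=list)  # pending human review

    def run(self, name: str, action: Callable[[dict], dict], state: dict,
            within_policy: Callable[[dict], bool]) -> dict:
        if self.suspended:
            # Suspend execution: nothing runs while the breaker is open.
            self.log.append(f"skipped {name}: breaker open")
            return state
        candidate = action(dict(state))  # act on a copy so the safe state survives
        if within_policy(candidate):
            self.log.append(f"applied {name}")
            return candidate
        # Revert to safe state, log the decision, and escalate if the limit is hit.
        self.violations += 1
        self.log.append(f"reverted {name}: policy violation")
        if self.violations >= self.max_violations:
            self.suspended = True
            self.review_queue.append(name)
        return state


def policy(s: dict) -> bool:
    return s["discount_pct"] <= 20  # hypothetical policy boundary


breaker = CircuitBreaker()
state = {"discount_pct": 5}
state = breaker.run("raise_discount", lambda s: {**s, "discount_pct": 60}, state, policy)
state = breaker.run("small_discount", lambda s: {**s, "discount_pct": 10}, state, policy)
```

In this run the first action breaches the policy, so the state stays at its last safe value and the breaker opens; the second action, although harmless on its own, is skipped until a human clears the review queue.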

Autonomy without interruption controls is systemic risk.

Executive Brief: The Circuit Breaker Protocol →

Ready to operationalize safe autonomy?

If you are moving from dashboards to agentic systems, the first step is validating the architecture below the waterline.